Data Center Chip Market Size, Share, and Growth Forecast 2026 - 2033

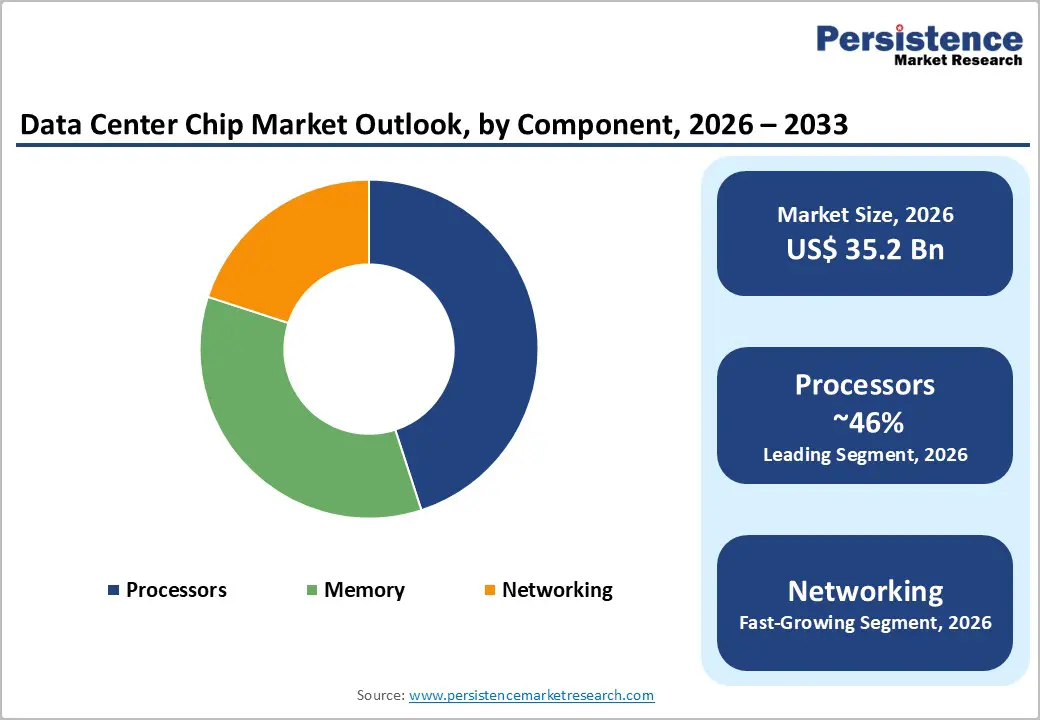

Data Center Chip Market by Component (Processors: Central Processing Units, Graphics Processing Units, Field-Programmable Gate Arrays, Application-Specific Integrated Circuits, Others; Memory: High Bandwidth Memory, Double Data Rate; Networking: Network Interface Cards and Network Adapters, Interconnects), Data Center Size (Small and Medium-Sized Data Centers, Large Data Centers), End-use (BFSI, Healthcare, Automotive, Media and Entertainment, IT and Telecom, Retail and E-commerce, Government, Others), and Regional Analysis for 2026 - 2033

Data Center Chip Market Size and Trend Analysis

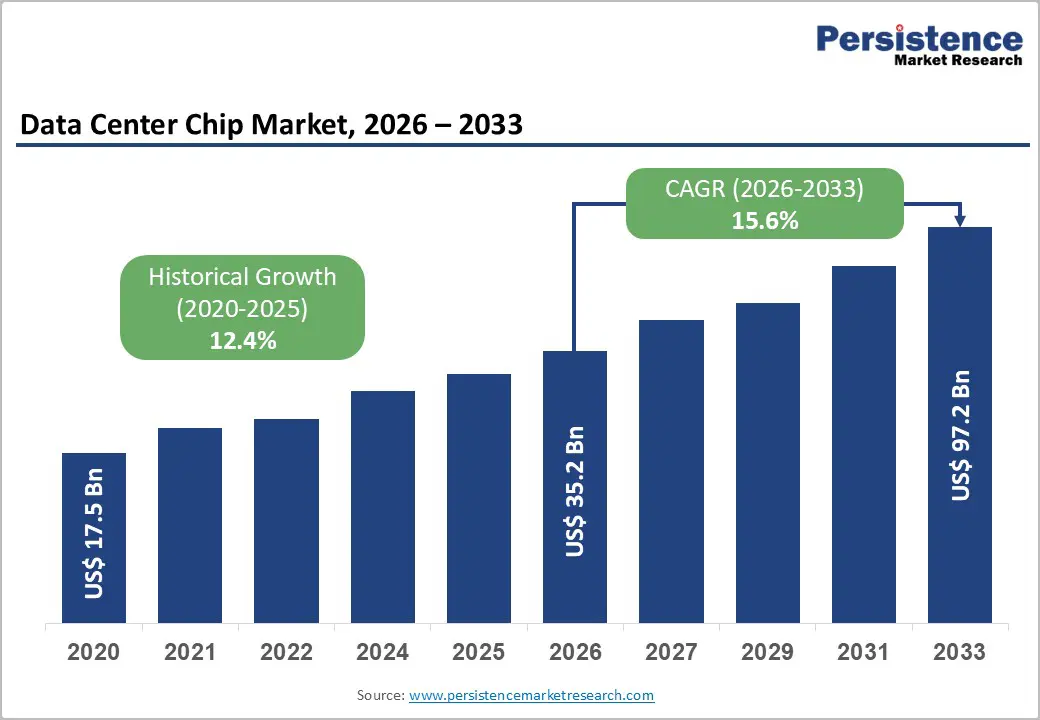

The global data center chip market is expected to be valued at US$ 35.2 billion in 2026 and is projected to reach US$ 97.1 billion by 2033, growing at a CAGR of 15.6% between 2026 and 2033. This growth is driven by the structural shift of enterprise and cloud workloads toward artificial intelligence, large language model training, and high-performance inference, which require purpose-built, highly parallel semiconductor architectures that conventional processors cannot cost-effectively deliver.
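The headline figures can be cross-checked with the standard compound annual growth rate formula. The sketch below is illustrative only: the helper names `cagr` and `project` are hypothetical, and it assumes seven compounding periods between 2026 and 2033.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.156 means 15.6%)."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding `rate` annually for `years` periods."""
    return start_value * (1 + rate) ** years


# Figures quoted in the report, in US$ billion.
market_2026 = 35.2
market_2033 = 97.1
periods = 2033 - 2026  # assumption: seven annual compounding periods

implied_rate = cagr(market_2026, market_2033, periods)
projected_2033 = project(market_2026, 0.156, periods)

print(f"Implied CAGR 2026-2033: {implied_rate:.1%}")
print(f"2033 value at 15.6% CAGR: US$ {projected_2033:.1f} billion")
```

Under these assumptions the implied rate rounds to the stated 15.6%, and compounding US$ 35.2 billion at that rate lands at roughly the stated US$ 97.1 billion.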

Key Industry Highlights:

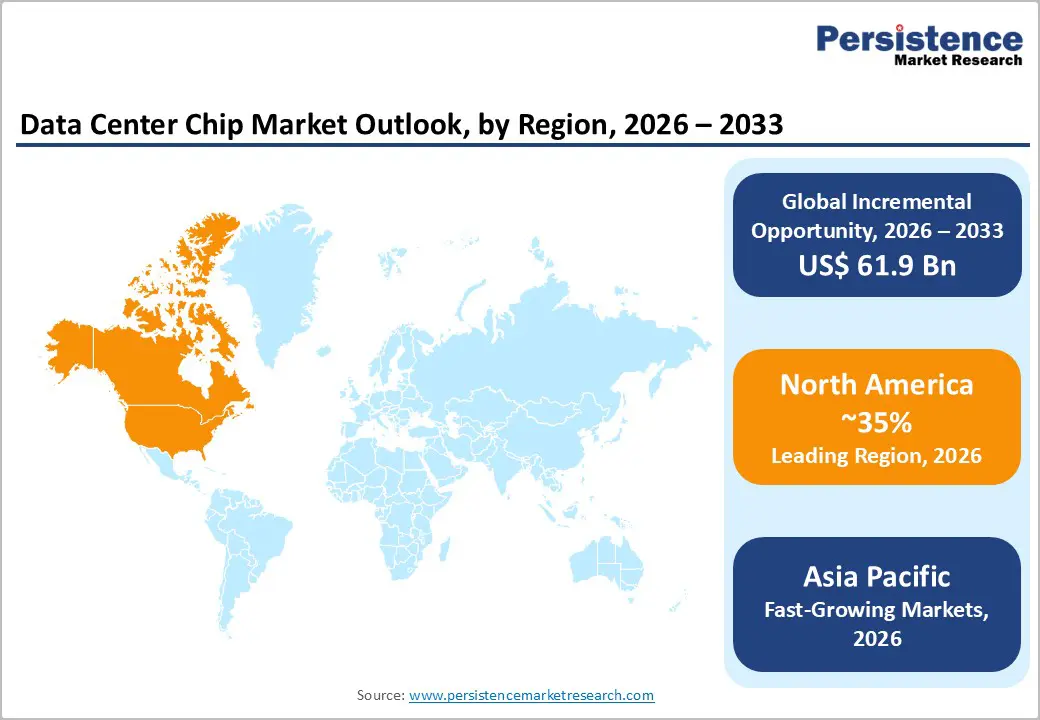

- Leading Region: North America leads the global data center chip market, with the US CHIPS Act committing US$ 52.7 billion to domestic semiconductor manufacturing and hyperscalers deploying over US$ 390 billion in AI data center capital expenditure during 2025, anchoring global chip procurement.

- Fastest Growing Region: Asia Pacific is the fastest-growing region, attracting over US$ 150 billion in AI data center investment in 2025, with Samsung and SK Hynix expanding HBM capacity by up to 50% and India emerging as the fastest-growing individual market.

- Dominant Segment: GPUs lead the Component category with approximately 57% market share, generating US$ 28.5 billion (87.6% of AI chipset quarterly sales) in Q4 2024, driven by their unmatched parallel compute for AI training and inferencing workloads.

- Fastest Growing Segment: AI ASICs are the fastest-growing component type, with Omdia forecasting AI ASIC revenue to reach US$ 84.5 billion by 2030 as Google, Amazon, and Microsoft scale proprietary silicon deployments across hyperscale data centers.

- Key Market Opportunity: Custom ASIC development for hyperscalers and healthcare AI infrastructure both represent high-margin, structurally durable demand channels, with healthcare IT projected to exceed US$ 390 billion by 2030 and over 85% of global central banks actively deploying AI analytics.

| Key Insights | Details |
|---|---|
| Data Center Chip Market Size (2026E) | US$ 35.2 Bn |
| Market Value Forecast (2033F) | US$ 97.1 Bn |
| Projected Growth (CAGR 2026 to 2033) | 15.6% |
| Historical Market Growth (CAGR 2019 to 2024) | 12.4% |

Market Dynamics

Drivers - Accelerating AI and Large Language Model Workloads Create Unprecedented Demand for Specialized Compute Chips

Generative AI and large language models have fundamentally restructured procurement priorities in the data center chip market, driving hyperscalers and enterprises to deploy massive quantities of AI accelerators and supporting memory architectures at an unprecedented pace. According to Research, GPUs alone generated US$ 28.5 billion, representing 87.6% of the record US$ 32.6 billion in AI data center chipset quarterly revenue in Q4 2024, with a quarter-over-quarter growth rate of 24.8%. NVIDIA dominated this segment with approximately 85% AI accelerator market share. NVIDIA's full-year data center revenue for fiscal year 2025 reached US$ 115.2 billion, more than doubling from the prior year, with approximately 40% of that revenue attributed specifically to AI inferencing workloads.
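The quarterly figures above can be related to one another with simple arithmetic. The sketch below is a rough check, not a restatement of the source data: the variable names are hypothetical, and it assumes the 24.8% quarter-over-quarter growth applies to total AI data center chipset revenue, which the text does not state explicitly.

```python
# Q4 2024 figures quoted in the report, in US$ billion.
gpu_revenue = 28.5
total_revenue = 32.6
qoq_growth = 0.248  # assumption: applies to total chipset revenue

# GPU share of total AI data center chipset revenue for the quarter.
gpu_share = gpu_revenue / total_revenue

# Revenue left for all non-GPU chipsets (ASICs, FPGAs, and others).
non_gpu_revenue = total_revenue - gpu_revenue

# Prior-quarter total implied by the growth rate, under the assumption above.
implied_prior_quarter = total_revenue / (1 + qoq_growth)

print(f"GPU share from rounded figures: {gpu_share:.1%}")
print(f"Non-GPU revenue: US$ {non_gpu_revenue:.1f} billion")
print(f"Implied prior-quarter total: US$ {implied_prior_quarter:.1f} billion")
```

The rounded inputs give a GPU share of about 87.4%, slightly below the quoted 87.6%. The gap is presumably due to rounding in the published revenue figures.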

Government Policy and Hyperscale Capital Commitments Structurally Anchor Chip Infrastructure Investment

National semiconductor strategies and hyperscale capital expenditure programs are embedding long-horizon demand floors in the data center chip market, reducing cyclicality and underwriting sustained procurement of advanced processor, memory, and networking chips. The US CHIPS and Science Act allocated US$ 52.7 billion toward domestic semiconductor manufacturing and research, with US$ 39 billion in direct manufacturing subsidies. Under this program, Intel received US$ 8.5 billion, TSMC received US$ 6.6 billion, Samsung received US$ 6.4 billion, and Micron Technology received US$ 6.1 billion in grants, each tied to new US-based advanced semiconductor fabrication facilities. The European Chips Act committed €43 billion in total public and private investment. Concurrently, the Stargate initiative, a joint venture involving OpenAI, SoftBank, and Oracle, with Microsoft among its technology partners, targets US$ 500 billion in AI infrastructure investment, directly anchoring long-duration chip supply agreements.

Restraints - US Export Controls Structurally Limit the Addressable Market and Require Costly Product Differentiation

The US Bureau of Industry and Security (BIS) has progressively tightened export restrictions on advanced semiconductor chips under the Export Administration Regulations (EAR), preventing the sale of NVIDIA's highest-performance AI accelerators to customers in certain countries and requiring the development of downgraded product variants. In 2025, additional licensing requirements were extended to over 120 countries, directly excluding portions of potential global demand from the data center chip market and compelling manufacturers to maintain parallel product development roadmaps, thereby increasing R&D expenditure without commensurate revenue return. These restrictions, while motivated by national security objectives, structurally constrain the total addressable volume of highest-margin advanced AI chips.

HBM Supply Bottlenecks and Advanced Packaging Constraints Slow Data Center Deployment Timelines

High Bandwidth Memory (HBM) is an irreplaceable component of AI accelerators, yet supply has consistently lagged deployment demand. Samsung and SK Hynix, the two dominant HBM producers, announced aggressive capacity expansions in January 2026: Samsung is targeting a 50% increase in HBM production capacity in 2026, and SK Hynix has committed to investing more than four times its previously stated figure. Despite these measures, lead times for HBM remain extended, with letters of intent for the Stargate project alone covering up to 900,000 DRAM wafers per month from both suppliers by 2029. Advanced CoWoS packaging capacity at TSMC is an additional bottleneck. It constrains the speed at which AI chips can be assembled and shipped, creating deployment lags that moderate the short-term revenue velocity of the data center chip market.

Opportunities - Custom AI ASIC Development by Hyperscalers Opens a New Commercial Tier in the Data Center Chip Market

The accelerating trend of hyperscalers designing proprietary Application-Specific Integrated Circuits (ASICs) for AI training and inferencing creates a major opportunity tier within the data center chip market and is reshaping the competitive structure of the Data Center Transformation Market. Google's Tensor Processing Units (TPUs), Amazon Web Services' Trainium and Inferentia, Microsoft's Maia 100, and Meta's MTIA chips are commercially deployed at scale and optimized for specific AI model architectures, delivering superior cost-per-inference at hyperscale versus merchant GPU alternatives. According to Omdia, AI ASIC revenue is forecast to reach US$ 84.5 billion by 2030, growing from a small, single-digit base in 2022. For Arm Limited, whose chip architecture underlies a significant proportion of custom ASIC designs, this trend translates directly into licensing and royalty revenue growth with minimal capital expenditure. For EDA software vendors, chip packaging specialists, and advanced foundries such as TSMC and Samsung Foundry, the complexity of ASIC production creates high-margin, long-duration engagements.

Healthcare and BFSI AI Workloads Provide Durable, Compliance-Anchored Demand for Specialized Data Center Chips

Healthcare and BFSI represent two of the highest-value and most structurally durable demand verticals for data center chips, distinguished from consumer AI applications by their regulatory compliance requirements, real-time processing demands, and sensitivity to data residency mandates. AI diagnostic imaging, genomics sequencing, real-time fraud detection, high-frequency trading, and central bank payment analytics all require dedicated, high-performance computing with deterministic latency profiles.

The Bank for International Settlements (BIS) has documented that over 85% of global central banks are actively deploying AI in financial supervision and payment analytics, creating institutional data center chip demand insulated from consumer sentiment cycles. The US healthcare IT sector is projected to exceed US$ 390 billion by 2030, driven by AI-powered diagnostics, electronic health record consolidation, and precision medicine platforms.

Category-wise Analysis

Component Insights

Graphics Processing Units (GPUs) lead the Component category of the data center chip market, accounting for approximately 57% of total component segment revenue in 2026. GPUs' massively parallel architecture, capable of executing thousands of floating-point operations simultaneously, is uniquely aligned with the matrix multiplication operations central to neural network training and inferencing, giving them an irreplaceable role in AI infrastructure. Research confirmed that GPU revenue reached US$ 28.5 billion in Q4 2024, constituting 87.6% of total AI data center chipset quarterly sales. NVIDIA maintained approximately 85% AI accelerator market share, while AMD's Instinct series held approximately 6%. NVIDIA's Blackwell architecture, released in late 2024, delivers up to 30 times faster AI inference than its predecessor, the Hopper generation, further widening the performance gap over alternatives.

Data Center Size Insights

Large data centers dominate the data center size segment, accounting for approximately 72% of market revenue in 2026. The fundamental economics of AI infrastructure, which requires racks of hundreds or thousands of tightly networked GPUs operating with sub-microsecond interconnect latency, make hyperscale campuses the only commercially viable deployment environment for frontier AI workloads. The Stargate initiative targets US$ 500 billion in large-scale AI infrastructure, while hyperscalers collectively committed over US$ 390 billion in capital expenditure during 2025, predominantly in data center campuses ranging from 500 MW to 1 GW in capacity. Microsoft alone committed US$ 19 billion to Canadian AI infrastructure in Q4 2025.

Small and Medium-Sized Data Centers represent the fastest-growing size segment, driven by enterprise edge AI inference, data sovereignty mandates requiring local compute, and latency-sensitive healthcare and retail analytics workloads that cannot be cost-effectively routed to centralized hyperscale facilities.

End-user Insights

The IT and Telecom segment leads the end-user category, accounting for approximately 34% of the data center chip market revenue in 2026. IT and Telecom operators own and operate the majority of global hyperscale capacity, procure chips in the highest volumes, and embed data center chip costs into every dollar of cloud services revenue. Amazon Web Services, Microsoft Azure, and Google Cloud, the three leading cloud platforms, collectively generated over US$ 250 billion in cloud service revenue in 2024, with chip procurement embedded throughout. The International Telecommunication Union (ITU) reports global internet traffic growth at over 20% annually between 2020 and 2024, creating continuous pressure to expand chip-intensive data center capacity. The Healthcare segment is the fastest-growing end-use vertical, propelled by AI diagnostics platforms, genomics processing, real-time patient monitoring, and telemedicine infrastructure that require dedicated high-performance computing in regulated, auditable cloud environments.

Regional Insights

North America Data Center Chip Market Trends

North America leads the global data center chip market, anchored by the United States' concentration of semiconductor design firms, hyperscalers, AI research institutions, and the most comprehensive semiconductor industrial policy of any major economy. NVIDIA, AMD, Intel, Broadcom, Micron Technology, Google, AWS, and Texas Instruments are all US-headquartered, meaning the design, procurement, and deployment of the most advanced data center chips flow primarily through North American institutions. AMD surpassed Intel in data center processor revenue in Q3 2024, reporting US$ 3.549 billion versus US$ 3.3 billion for Intel, marking a historic competitive inflection in the server CPU market. The Semiconductor Industry Association (SIA) documented that the United States accounts for approximately 46% of global semiconductor revenue, providing a structural commercial advantage.

Europe Data Center Chip Market Trends

Europe is repositioning itself as a strategic participant in the data center chip market, combining comprehensive AI regulation with substantial public investment in compute infrastructure. The EU AI Act, the world's first comprehensive legal framework for artificial intelligence, entered into force on August 1, 2024, with core provisions taking effect in August 2026, structuring AI compute procurement around risk classification and transparency requirements.

The EU AI Continent Action Plan, published in April 2025, established AI Factories, Gigafactories, and the Invest AI Facility to close Europe's data center compute deficit relative to the United States and China. The EU's Digital Europe Programme earmarked €2.1 billion for AI infrastructure from 2021 to 2027. France committed €109 billion to data center and AI infrastructure investment, while a coalition of European companies and investors launched a proposal to mobilize €150 billion for European AI scale-ups over five years, supported by institutions from Deutsche Bank to Mistral AI.

Asia Pacific Data Center Chip Market Trends

Asia Pacific is the fastest-growing region in the global data center chip market, combining the world's dominant memory chip supply infrastructure with the largest and fastest-expanding data center investment pipeline outside North America. Samsung Electronics and SK Hynix in South Korea produce the overwhelming majority of global HBM supply, with both companies committing to large-scale 2026 capacity expansions, Samsung at +50% HBM output and SK Hynix at more than four times its prior investment commitment. SK Hynix shipped HBM4 samples to major clients including NVIDIA and Broadcom at NVIDIA's GTC 2025 event in March 2025, establishing the supply chain for next-generation AI accelerators.

According to JLL, Asia Pacific attracted over US$ 150 billion in AI data center infrastructure investment, with deployment expected in the second half of 2025. India, Australia, Japan, and Southeast Asian markets, including Malaysia, Singapore, Thailand, and Indonesia, are emerging as strategic investment destinations. Alibaba Group announced US$ 52.7 billion in cloud and AI infrastructure investment, expanding across 29 regions and 87 availability zones. China maintains a substantial domestic chip demand base through its 14th Five-Year Plan mandates for cloud, big data, and AI self-sufficiency, while simultaneously developing domestic AI chip alternatives such as Huawei's Ascend series.

Competitive Landscape

The global data center chip market is tightly consolidated at the AI accelerator tier, operating as a functional oligopoly with NVIDIA holding approximately 85% of AI chipset revenue. AMD, Intel, Broadcom, Samsung, and SK Hynix contest adjacent processor, ASIC, networking, and memory segments. Competitive differentiation strategies center on proprietary AI architectures, software ecosystem lock-in (NVIDIA's CUDA), HBM integration, and foundry partnership depth with TSMC. Emerging business models include hyperscale-designed custom ASICs, GPU-as-a-service cloud delivery, and Arm Limited's IP licensing model, which enables both startups and hyperscalers to develop custom data center silicon. Market leaders are intensifying investments in advanced packaging, photonic interconnects, and liquid cooling-compatible chip designs to address power density constraints at gigawatt-scale facilities.

Key Developments:

- In February 2025, Intel Corporation introduced the Intel Xeon 6 processors, featuring performance cores optimized for delivering top-tier performance in data centers, including up to twice the performance in AI processing. In addition, these new processors, designed for network and edge applications, incorporate Intel vRAN Boost, which enhances capacity by up to 2.4 times for radio access network (RAN) workloads.

- In February 2025, STMicroelectronics introduced a new chip for AI data centers, developed in partnership with Amazon Web Services (AWS). Using photonics technology, the chip relies on light instead of electricity, increasing speed while reducing power consumption in AI-driven data centers.

Companies Covered in Data Center Chip Market

- NVIDIA Corporation

- Intel Corporation

- Advanced Micro Devices, Inc.

- Micron Technology, Inc.

- Broadcom Inc.

- Samsung Electronics Co., Ltd.

- SK Hynix Inc.

- Arm Limited

- AWS

- Alibaba Group

- Texas Instruments Incorporated

- Other Key Players

Frequently Asked Questions

The global Data Center Chip Market is projected to reach US$ 97.1 Bn by 2033, growing at a CAGR of 15.6% from an estimated US$ 35.2 Bn in 2026. The market recorded a historical CAGR of 12.4% between 2020 and 2025, starting from US$ 17.5 Bn in 2020.

The primary drivers are the explosive adoption of generative AI and large language model workloads, with NVIDIA reporting US$ 115.2 billion in data center revenue in fiscal year 2025, and institutionalized capital deployment through the US CHIPS Act (US$ 52.7 billion) and hyperscale commitments exceeding US$ 390 billion in 2025 AI infrastructure investment.

Graphics Processing Units (GPUs) lead the Component segment with approximately 57% market share, confirmed by Research data showing GPUs generating US$ 28.5 billion, or 87.6% of total AI chipset quarterly sales, in Q4 2024. NVIDIA holds approximately 85% of AI accelerator revenue within this segment.

North America leads, with the US CHIPS Act committing US$ 52.7 billion in semiconductor investment, AMD surpassing Intel in data center processor revenue in Q3 2024, and the Semiconductor Industry Association (SIA) confirming the United States accounts for approximately 46% of global semiconductor revenue.

Leading market players include NVIDIA Corporation, Advanced Micro Devices, Inc., Intel Corporation, Broadcom Inc., Samsung Electronics Co., Ltd., SK Hynix Inc., Micron Technology, Inc., Arm Limited, Google, Amazon Web Services, Alibaba Group, and Texas Instruments Incorporated, among others operating across processors, memory, and networking chip segments.